Cluster API is a Kubernetes sub-project focused on providing declarative APIs and tooling to simplify provisioning, upgrading, and operating multiple Kubernetes clusters.

Kubernetes is a complex system that relies on several components being configured correctly to have a working cluster. Recognizing this as a potential stumbling block for users, the community focused on simplifying the bootstrapping process. Today, over 100 Kubernetes distributions and installers have been created, each with different default configurations for clusters and supported infrastructure providers. SIG Cluster Lifecycle saw a need for a single tool to address a set of common overlapping installation concerns and started kubeadm.

However, while kubeadm and other bootstrap providers reduce installation complexity, they don’t address how to manage a cluster day-to-day or a Kubernetes environment long term. You are still faced with several questions when setting up a production environment, including:

SIG Cluster Lifecycle began the Cluster API project as a way to address these gaps by building declarative, Kubernetes-style APIs, that automate cluster creation, configuration, and management. Using this model, Cluster API can also be extended to support any infrastructure provider (AWS, Azure, vSphere, etc.) or bootstrap provider (kubeadm is default) you need. See the growing list of available providers .

Cluster API is developed in the open, and is constantly being improved by our users, contributors, and maintainers. It is because of you that we are able to automate cluster lifecycle management for the community. Join us!

If you have questions or want to get the latest project news, you can connect with us in the following ways:

In this tutorial we’ll cover the basics of how to use Cluster API to create one or more Kubernetes clusters.

There are two major quickstart paths: Using clusterctl or the Cluster API Operator.

The clusterctl command line interface is specifically designed for providing a simple “day 1 experience” and a quick start with Cluster API. It automates fetching the YAML files defining provider components and installing them.

Additionally it encodes a set of best practices in managing providers, that helps the user in avoiding mis-configurations or in managing day 2 operations such as upgrades.

The Cluster API Operator is a Kubernetes Operator built on top of clusterctl and designed to empower cluster administrators to handle the lifecycle of Cluster API providers within a management cluster using a declarative approach. It aims to improve user experience in deploying and managing Cluster API, making it easier to handle day-to-day tasks and automate workflows with GitOps. Visit the CAPI Operator quickstart if you want to experiment with this tool.

Cluster API requires an existing Kubernetes cluster accessible via kubectl. During the installation process the

Kubernetes cluster will be transformed into a management cluster by installing the Cluster API provider components , so it

is recommended to keep it separated from any application workload.

It is a common practice to create a temporary, local bootstrap cluster which is then used to provision

a target management cluster on the selected infrastructure provider .

The clusterctl CLI tool handles the lifecycle of a Cluster API management cluster.

Now that we’ve got clusterctl installed and all the prerequisites in place, let’s transform the Kubernetes cluster

into a management cluster by using clusterctl init.

The command accepts as input a list of providers to install; when executed for the first time, clusterctl init

automatically adds to the list the cluster-api core provider, and if unspecified, it also adds the kubeadm bootstrap

and kubeadm control-plane providers.

Feature gates can be enabled by exporting environment variables before executing clusterctl init.

For example, the ClusterTopology feature, which is required to enable support for managed topologies and ClusterClass,

can be enabled via:

Depending on the infrastructure provider you are planning to use, some additional prerequisites should be satisfied

before getting started with Cluster API. See below for the expected settings for common providers.

Once the management cluster is ready, you can create your first workload cluster.

Depending on the infrastructure provider you are planning to use, some additional prerequisites should be satisfied

before configuring a cluster with Cluster API. Instructions are provided for common providers below.

For the purpose of this tutorial, we’ll name our cluster capi-quickstart.

The file can be eventually modified using your editor of choice.

When ready, run the following command to apply the cluster manifest.

The cluster will now start provisioning. You can check status with:

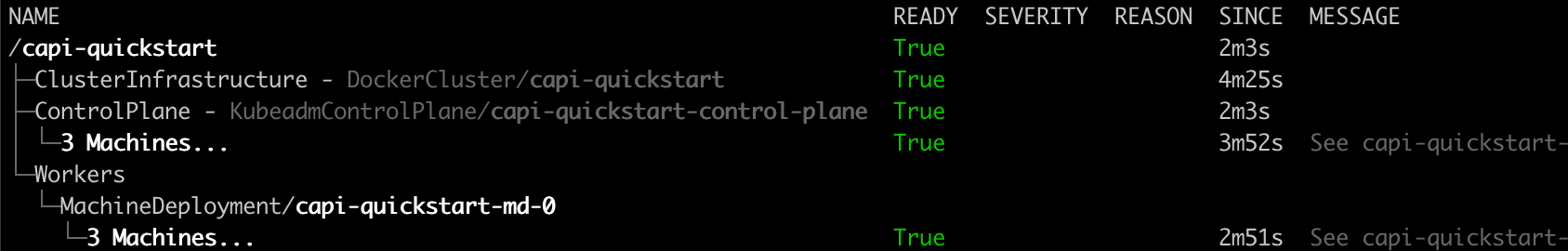

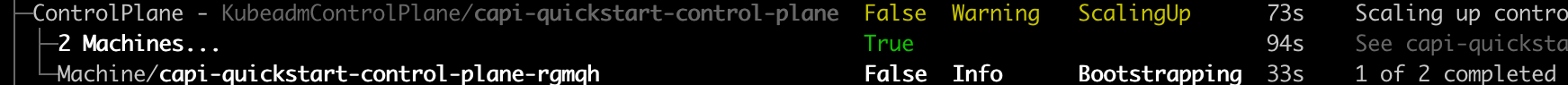

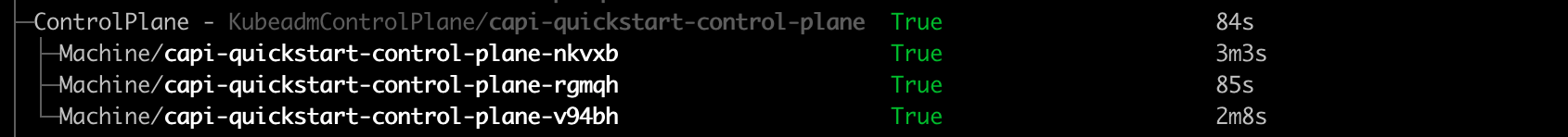

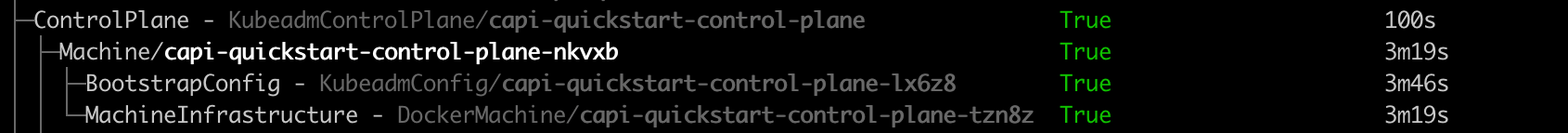

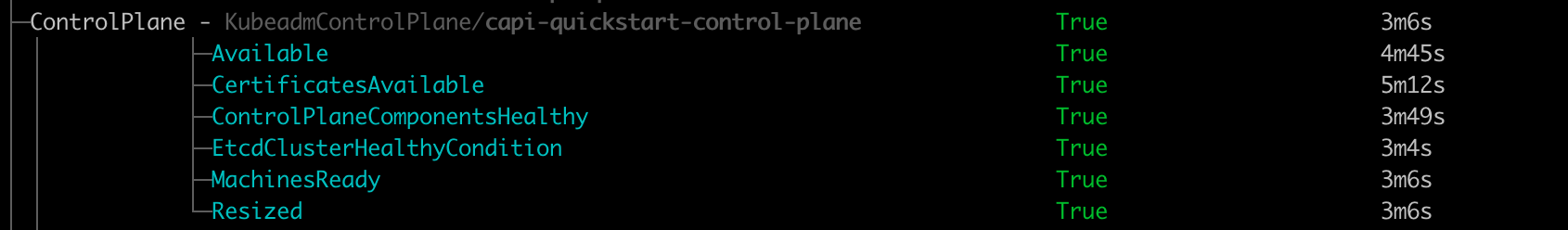

You can also get an “at glance” view of the cluster and its resources by running:

Calico is used here as an example.

Delete workload cluster.

This section provides a quickstart guide for using the Cluster API Operator to create a Kubernetes cluster.

To use the clusterctl quickstart path, visit this quickstart guide .

This is a quickstart guide for getting Cluster API Operator up and running on your Kubernetes cluster.

For more detailed information, please refer to the full documentation.

Instead of using environment variables as clusterctl does, Cluster API Operator uses Kubernetes secrets to store credentials for cloud providers. Refer to provider documentation on which credentials are required.

This example uses AWS provider, but the same approach can be used for other providers.

Deploy Cluster API components with docker provider using a single command during operator installation

Deploy Cluster API AWS provider with specific version, custom manager options and flags:

Similar to how you can use StatefulSets or Deployments in Kubernetes to manage a group of Pods, in Cluster API you can use custom resources like KubeadmControlPlane (a control plane implementation) to manage a set of control plane Machines, or you can use MachineDeployments to manage a group of worker Machines, each one of them representing a host server and the corresponding Kubernetes Node.

Extensibility is at the core of Cluster API and Cluster API providers like Cluster API provider VSphere, AWS, GCP etc. can be used

to deploy Cluster API managed Clusters to your preferred infrastructure, as well as to configure many other parts of the system.

A Kubernetes cluster where Cluster API and one or more Cluster API providers run, and that can be used to manage the lifecycle of your Kubernetes Cluster via a set of custom resources such as Cluster or Machines .

A “Cluster” is a custom resource that represent a Kubernetes cluster whose lifecycle is managed by Cluster API, usually also referred to as workload cluster.

Common properties such as network CIDRs are modeled as fields on the Cluster’s spec. Any information that is provider-specific is part of the custom resources

referenced via infrastructureRef or controlPlaneRef and is not portable between different providers.

In most recent versions of Cluster API, the Cluster object can be used as a single point of control for the entire cluster.

See ClusterClass

A “Machine” is a custom resource providing the declarative spec for infrastructure hosting a Kubernetes Node (for example, a VM).

Common fields such as the Kubernetes version are modeled as fields on the Machine’s spec. Any information that is provider-specific is part of the custom resources

referenced via infrastructureRef or bootstrap.configRef and is not portable between different providers.

If a new Machine object is created, a provider-specific controller will provision and install a new host to register as a new Node matching the Machine spec. If a Machine object is deleted, its underlying infrastructure and corresponding Node will be deleted.

Like for Pods in Kubernetes, also for Machines in Cluster API it is more convenient to not manage single Machines directly. Instead you should use resources like KubeadmControlPlane (a control plane implementation), MachineDeployments or MachinePools to manage a group of Machines.

From the perspective of Cluster API, all Machines are immutable: once they are created, they are never updated (except for labels, annotations and status), only deleted.

For this reason, MachineDeployments are preferable. MachineDeployments handle changes to machines by replacing them, in the same way core Deployments handle changes to Pod specifications.

Over time several improvement have been applied to Cluster API in oder to perform machine rollout only when necessary and

for minimizing risks and impact of this operation on users workloads.

Starting from Cluster API v1.12, users can intentionally trade off some of the benefits that they get of Machine immutability by

using Cluster API extensions points to add the capability to perform in-place updates under well-defined circumstances.

Notably, the Cluster API user experience will remain the same no matter of the in-place update feature is enabled

or not, because ultimately users should care ONLY about the desired state.

Cluster API is responsible to choose the best strategy to achieve desired state, and with the introduction of

update extensions, Cluster API is expanding the set of tools that can be used to achieve the desired state.

A component responsible for the provisioning of infrastructure/computational resources required by the Cluster or by Machines (e.g. VMs, networking, etc.).

For example, cloud Infrastructure Providers include AWS, Azure, and Google, and bare metal Infrastructure Providers include VMware, MAAS, and metal3.io.

When there is more than one way to obtain resources from the same Infrastructure Provider (such as AWS offering both EC2 and EKS), each way is referred to as a variant.

A component responsible for the provisioning and for the management of the control plane of your Kubernetes Cluster, like e.g. the KubeadmControlPlane provider.

Control plane providers can take different approach on how to manage the control plane;

A component responsible for turning a server into a Kubernetes node as well as for:

Boostrap provider achieve this goal by generating BootstrapData, which contains the Machine or Node role-specific initialization data (usually cloud-init). The bootstrap data is used by the Infrastructure Provider to bootstrap a Machine into a Node.

The KubeadmControlPlane is a custom resource that is provided by the Kubeadm provider, and that allows to manage a set of Machines hosting control plane Nodes created with kubeadm.

Other control plane providers implement similar resources as well.

A MachineDeployment provides declarative updates for Machines and MachineSets.

A MachinePool is a declarative spec for a group of Machines. It is similar to a MachineDeployment, but is specific to a particular Infrastructure Provider. For more information, please check out MachinePool .

A MachineSet’s purpose is to maintain a stable set of Machines running at any given time.

A MachineHealthCheck defines the conditions when a Node should be considered missing or unhealthy.

If the Node matches these unhealthy conditions for a given user-configured time, the MachineHealthCheck initiates remediation of the Node. Remediation of Nodes is performed by replacing the corresponding Machine.

MachineHealthChecks will only remediate Nodes if they are owned by a MachineSet. This ensures that the Kubernetes cluster does not lose capacity, since the MachineSet will create a new Machine to replace the failed Machine.

This document would hopefully provide valuable context for all users, contributors and companies investing in this project,

as well as act as compass for all reviewers and maintainers currently working on it.

The Cluster API community is the foundation for this project’s past, present and future.

The project will continue to encourage and praise active participation and contribution.

The Cluster API community is an active part of Kubernetes SIG Cluster Lifecycle, of the broader Kubernetes community

and of the CNCF.

CNCF provides the core values this project recognizes and contributes to.

The Kubernetes community provides most of the practices and policies this project abides to or is inspired by.

Nowadays, like at the beginning of the project, some concepts from the above statement deserve further clarification.

The Cluster API project motto is “Kubernetes all the way down”, and this boils down to two elements.

The target state of a cluster can be defined using Kubernetes declarative APIs.

The project also implements controllers – Kubernetes reconcile loops – ensuring that desired and current state of the

cluster will remain consistent over time.

The combination of those elements, declarative APIs and controllers, defines “how” this project aims to make Kubernetes

and Cluster API a stable, reliable and consistent platform that just works to enable higher order business value

supported by cloud-native applications.

Kubernetes Cluster lifecycle management is a complex problem space, especially if you consider doing this across so

many different types of infrastructures.

Hiding this complexity behind a simple declarative API is “why” the Cluster API project ultimately exists.

The project is strongly committed to continue its quest in defining a set of common API primitives working consistently

across all infrastructures (one API to rule them all).

Working towards graduating our API to v1 will be the next step in this journey.

While doing so, the project should be inspired by Tim Hockin’s talk, and continue to move forward without increasing

operational and conceptual complexity for Cluster API’s users.

Like Kubernetes, also the Cluster API project claims the right to remain unfinished, because there is still a strong,

foundational need to continuously evolve, improve and adapt to the changing needs of Cluster API’s users and to the

growing Cloud Native ecosystem.

What is important to notice, is that being a project that is “continuously evolving” is not in contrast with another

request from Cluster API’s users, which is about the project being stable, as expected by a system that has “crossed the chasm”.

Those two requests from Cluster API’s users are two sides of the same coin, a reminder that Cluster API must

“evolve responsibly” by ensuring upgrade paths and avoiding (or at least minimizing) disruptions for users.

The Cluster API project will continue to “evolve responsibly” by abiding to the same guarantees that Kubernetes offers

for its own APIs, but also ensuring a continuous and obsessive focus on CI signal, test coverage and test flakes.

Also ensuring a predictable release calendar, clear support windows and compatibility matrix for each release

is a crucial part of this effort to “evolve responsibly”.

There is a finite amount of complexity that a project can absorb over a certain amount of time;

when the complexity budget runs out, bad things happen, quality decreases, we can’t fix bugs timely etc.

Since the beginning of the Cluster API project, its maintainers intuitively handled the complexity budget by following

this approach:

“We’ve got to say no to things today, so we can afford to do interesting things tomorrow”.

This is something that is never done lightly, and it is always the result of an open discussion considering the status

of the codebase, the status of the project CI signal, the complexity of the new feature etc. .

Being very pragmatic, also the resources committed to implement and to maintain a feature over time must be considered

when doing such an evaluation, because a model where everything falls on the shoulders of a small set of core

maintainers is not sustainable.

On the other side of this coin, Cluster API maintainer’s also claim the right to reconsider new ideas or ideas

previously put on hold whenever there are the conditions and the required community consensus to work on it.

Probably the most well-known case of this is about Cluster API maintainers repeatedly deferring on change requests

about nodes mutability in the initial phases of the project, while starting to embrace some mutable behavior in recent releases.

The Cluster API project is committed to keep working with the broader CAPI community – all the Cluster API providers –

as a single team in order to continuously improve and expand the capability of this solution.

As we learned the hard way, the extensibility model implemented by CAPI to support so many providers requires a

complementary effort to continuously explore new ways to offer a cohesive solution, not a bag of parts.

It is important to continue and renew efforts to make it easier to bootstrap and operate a system composed of many

components, to ensure consistent APIs and behaviors, to ensure quality across the board.

This effort lays its foundation in all the provider maintainers being committed to this goal, while the Cluster API project

will be the venue where common guidelines are discussed and documented, as well as the place of choice where common

components or utilities are developed and hosted.

This section provides details for some of the operations that need to be performed

when managing clusters.

This section details some tasks related to certificate management.

Cluster API expects certificates and keys used for bootstrapping to follow the below convention. CABPK generates new certificates using this convention if they do not already exist.

When using Kubeadm Control Plane provider (KCP) it is possible to configure automatic certificate rotations. KCP does this by triggering a rollout when the certificates on the control plane machines are about to expire.

If configured, the certificate rollout feature is available for all new and existing control plane machines.

This section provides details about bootstrap providers.

Cluster API bootstrap provider Kubeadm (CABPK) is a component responsible for generating a cloud-init script to

turn a Machine into a Kubernetes Node. This implementation uses kubeadm

for Kubernetes bootstrap.

CABPK supports multiple control plane machines initing at the same time.

The generation of cloud-init scripts of different machines is orchestrated in order to ensure a cluster

bootstrap process that will be compliant with the correct Kubeadm init/join sequence. More in detail:

CAPBK has several ways to configure kubelet.

This method is easy to replace the whole kubelet configuration generated by kubeadm, but it is not easy to replace only a part of the kubelet configuration.

This method is useful when you want to set kubelet flags that are not configurable via the KubeletConfiguration file, however, it is not recommended to use this method to set flags that are configurable via the KubeletConfiguration file.

This method is useful when you want to change the kubelet configuration file partially on specific nodes. For example, you can deploy a partially patched kubelet configuration file on specific nodes based on the default configuration used for kubeadm init or kubeadm join.

Cluster API bootstrap provider MicroK8s (CABPM) is a component responsible for generating a cloud-init script to turn a Machine into a Kubernetes Node. This implementation uses MicroK8s for Kubernetes bootstrap.

A control plane manifest section includes the Kubernetes version, the replica number as well as the MicroK8sConfig:

The main purpose of the MicroK8s bootstrap provider is to translate the users needs to a number of cloud-init files applicable for each type of cluster nodes. There are three types of cloud-inits:

The cloud-init scripts are saved as secrets that then the infrastructure provider uses during the machine creation. For more information on cloud-init options, see cloud config examples .

In addition, you must always upgrade between Kubernetes minor versions in sequence, e.g. if you need to upgrade from

Kubernetes v1.17 to v1.19, you must first upgrade to v1.18.

For kubeadm based clusters, infrastructure providers require a “machine image” containing pre-installed, matching

versions of kubeadm and kubelet, ensure that relevant infrastructure machine templates reference the appropriate

image for the Kubernetes version.

The high level steps to fully upgrading a cluster are to first upgrade the control plane and then upgrade

the worker machines.

The next step will trigger a rolling update of the control plane using the new values found in the new MachineTemplate.

Note that this field can only be used for triggering a rollout, not for delaying one. Specifically,

a rollout can also happen before the time specified in spec.rollout.after if any changes are made to

the spec before that time.

Upgrades are not limited to just the control plane. This section is not related to Kubeadm control plane specifically,

but is the final step in fully upgrading a Cluster API managed cluster.

Cluster API Bootstrap Provider Kubeadm supports using an external etcd cluster for your workload Kubernetes clusters.

Before getting started you should be aware of the expectations that come with using an external etcd cluster.

To use this, you will need to create an etcd cluster and generate an apiserver-etcd-client certificate and private key. This behaviour can be tested using kubeadmetcdadm

CA certificates are required to setup etcd cluster. If you already have a CA then the CA’s crt and key must be copied to /etc/kubernetes/pki/etcd/ca.crt and /etc/kubernetes/pki/etcd/ca.key.

This certificate and private key are used to sign etcd server and peer certificates as well as other client certificates (like the apiserver-etcd-client certificate or the etcd-healthcheck-client certificate). More information on how to setup external etcd with kubeadm can be found here .

Once the etcd cluster is setup, you will need the following files from the etcd cluster:

You’ll use these files to create the necessary Secrets on the management cluster (see the “Creating the required Secrets” section).

You’ll use these files in the next section to create the necessary Secrets on the management cluster.

Regardless of the method used to bootstrap the etcd cluster, you will need to use the certificates copied from the etcd cluster to create some Kubernetes Secrets on the management cluster.

First, you will need to create a Secret containing the API server etcd client certificate and key. This command assumes the certificate and private key are in the current directory; adjust your command accordingly if they are not:

Next, create a Secret for the etcd cluster’s CA certificate. The kubectl create secret tls command requires both a certificate and a key, but the key isn’t needed by CAPI. Instead, use the kubectl create secret generic command, and note that the file containing the CA certificate must be named tls.crt:

Alternatively you can base64 encode the files and put them in two secrets. The secrets must be formatted as follows and the cert material must be base64 encoded:

Once the Secrets are in place on the management cluster, the rest of the process leverages standard kubeadm configuration. Configure your ClusterConfiguration for the workload cluster as follows:

Create your workload cluster as normal. The new workload cluster should use the configured external etcd nodes instead of creating co-located etcd Pods on the control plane nodes.

In the overlay directories, the “base” (unmodified) Cluster API configuration (perhaps generated using

clusterctl generate cluster) would be referenced as a resource in kustomization.yaml using ../../base.

In general, it’s recommended to upgrade to the latest version of Cluster API to take advantage of bug fixes, new

features and improvements.

If moving between different API versions, there may be additional tasks that you need to complete. See below for

detailed instructions.

Ensure that the version of Cluster API is compatible with the Kubernetes version of the management cluster.

This section provides details about control plane providers.

Using the Kubeadm control plane type to manage a control plane provides several ways to upgrade control plane machines.

KCP will generate and manage the admin Kubeconfig for clusters. The client certificate for the admin user is created

with a valid lifespan of a year, and will be automatically regenerated when the cluster is reconciled and has less than

6 months of validity remaining.

We don’t suggest running workloads on control planes, and highly encourage avoiding it unless absolutely necessary.

However, in the case the user wants to run non-control plane workloads on control plane machines they

are ultimately responsible for ensuring the proper functioning of those workloads, given that KCP is not

aware of the specific requirements for each type of workload (e.g. preserving quorum, shutdown procedures etc.).

In order to do so, the user could leverage on the same assumption that applies to all the

Cluster API Machines:

Changes to the following fields of KubeadmControlPlane are propagated in-place to the Machines and do not trigger a full rollout:

Changes to the following fields of KubeadmControlPlane are propagated in-place to the InfrastructureMachine and KubeadmConfig:

Note: Changes to these fields will not be propagated to Machines, InfraMachines and KubeadmConfigs that are marked for deletion (example: because of scale down).

Cluster API MicroK8s control plane provider (CACPM) is a component responsible for managing the control plane of the provisioned clusters. This implementation uses MicroK8s for cluster provisioning and management.

Currently the CACPM does not expose any functionality. It serves however the following purposes:

The correct process for modifying an infrastructure machine template is as follows:

Once the new infrastructure machine template has been persisted, users may modify

the object that was referencing the infrastructure machine template. For example,

to modify the infrastructure machine template for the KubeadmControlPlane object,

users would modify the spec.infrastructureTemplate.name field. For a MachineDeployment, users would need to modify the spec.template.spec.infrastructureRef.name

field and the controller would orchestrate the upgrade by managing MachineSets pointing to the new and old references. In the case of a MachineSet with no MachineDeployment owner, if its template reference is changed, it will only affect upcoming Machines.

Once the new bootstrap template has been persisted, users may modify

the object that was referencing the bootstrap template. For example,

to modify the bootstrap template for the MachineDeployment object,

users would modify the spec.template.spec.bootstrap.configRef.name field.

The name field should be updated to point to the newly-modified

bootstrap template. This will trigger a rolling update.

Follow the quickstart setup guide for your provider but ensure that CAAPH is installed via including the addon=helm with either:

This will automatically install ArgoCD in the ArgoCD namespace and the guestbook application into the guestbook namespace. Adding or labeling additional clusters with argoCDChart: enabled and guestbook: enabled will automatically install the ArgoCD agent and the guestbook application and there is no need to create additional CAAPH HelmChartProxy entries.

The ArgoCD console can be viewed by connecting to the workload cluster and then doing the following:

The Guestbook application deployment can be seen once logged into the ArgoCD console. Since the GitOps agent points to the git repository, any changes to the repository will automatically update the workload cluster. The git repository could be configured to utilize the App of Apps pattern to install all platform requirements for the cluster. The App of Apps pattern is a single application that installs all other applications and configurations for the cluster.

This section details some tasks related to automated Machine management.

This section applies only to worker Machines. You can add or remove compute capacity for your cluster workloads by creating or removing Machines. A Machine expresses intent to have a Node with a defined form factor.

Machines can be owned by scalable resources i.e. MachineSet and MachineDeployments.

You can scale MachineSets and MachineDeployments in or out by expressing intent via .spec.replicas or updating the scale subresource e.g kubectl scale machinedeployment foo --replicas=5.

When you delete a Machine directly or by scaling down, the same process takes place in the same order:

This section applies only to worker Machines. Cluster Autoscaler is a tool that automatically adjusts the size of the Kubernetes cluster based

on the utilization of Pods and Nodes in your cluster. For more general information about the

Cluster Autoscaler, please see the

project documentation .

The following instructions are a reproduction of the Cluster API provider specific documentation

from the Autoscaler project documentation .

The cluster-api provider requires Kubernetes v1.16 or greater to run the

v1alpha3 version of the API.

To enable the Cluster API provider, you must first specify it in the command

line arguments to the cluster autoscaler binary. For example:

Please note, this example only shows the cloud provider options, you will

most likely need other command line flags. For more information you can invoke

cluster-autoscaler --help to see a full list of options.

You must configure node group auto discovery to inform cluster autoscaler which cluster in which to find for scalable node groups.

Limiting cluster autoscaler to only match against resources in the blue namespace

Limiting cluster autoscaler to only match against resources belonging to Cluster test1

Limiting cluster autoscaler to only match against resources matching the provided labels

These can be mixed and matched in any combination, for example to only match resources

in the staging namespace, belonging to the purple cluster, with the label owner=jim:

You will also need to provide the path to the kubeconfig(s) for the management

and workload cluster you wish cluster-autoscaler to run against. To specify the

kubeconfig path for the workload cluster to monitor, use the --kubeconfig

option and supply the path to the kubeconfig. If the --kubeconfig option is

not specified, cluster-autoscaler will attempt to use an in-cluster configuration.

To specify the kubeconfig path for the management cluster to monitor, use the

--cloud-config option and supply the path to the kubeconfig. If the

--cloud-config option is not specified it will fall back to using the kubeconfig

that was provided with the --kubeconfig option.

Use in-cluster config for workload cluster, specify kubeconfig for management cluster:

Use in-cluster config for management cluster, specify kubeconfig for workload cluster:

To enable the automatic scaling of components in your cluster-api managed

cloud there are a few annotations you need to provide. These annotations

must be applied to either MachineSet , MachineDeployment , or MachinePool

resources depending on the type of cluster-api mechanism that you are using.

The Cluster API community has defined an opt-in method for infrastructure

providers to enable scaling from zero-sized node groups in the

Opt-in Autoscaling from Zero enhancement .

As defined in the enhancement, each provider may add support for scaling from

zero to their provider, but they are not required to do so. If you are expecting

built-in support for scaling from zero, please check with the Cluster API

infrastructure providers that you are using.

If your Cluster API provider does not have support for scaling from zero, you

may still use this feature through the capacity annotations. You may add these

annotations to your MachineDeployments, or MachineSets if you are not using

MachineDeployments (it is not needed on both), to instruct the cluster

autoscaler about the sizing of the nodes in the node group. At the minimum,

you must specify the CPU and memory annotations, these annotations should

match the expected capacity of the nodes created from the infrastructure.

For example, if my MachineDeployment will create nodes that have “16000m” CPU,

“128G” memory, “100Gi” ephemeral disk storage, 2 NVidia GPUs, and can support

200 max pods, the following annotations will instruct the autoscaler how to

expand the node group from zero replicas:

If you are using the opt-in support for scaling from zero as defined by the

Cluster API infrastructure provider, you will need to add the infrastructure

machine template types to your role permissions for the service account

associated with the cluster autoscaler deployment. The service account will

need permission to get, list, and watch the infrastructure machine

templates for your infrastructure provider.

Taints for scale from zero can be configured in two ways, listed below in

order of precedence (highest first):

To provide CSI driver information for scale from zero, the optional

capacity annotation may be supplied as a comma separated list of driver name

and volume limit key/value pairs, as demonstrated in the example below:

Custom autoscaling options per node group (MachineDeployment/MachinePool/MachineSet) can be specified as annoations with a common prefix:

Users of single-arch non-amd64 clusters who are using scale from zero

support should also set the CAPI_SCALE_ZERO_DEFAULT_ARCH environment variable

to set the architecture of the nodes they want to default the node group templates to.

The autoscaler will default to amd64 if it is not set, and the node

group templates may not match the nodes’ architecture, specifically when

the workload triggering the scale-up uses a node affinity predicate checking

for the node’s architecture.

By default all Kubernetes resources consumed by the Cluster API provider will

use the group cluster.x-k8s.io, with a dynamically acquired version. In

some situations, such as testing or prototyping, you may wish to change this

group variable. For these situations you may use the environment variable

CAPI_GROUP to change the group that the provider will use.

When determining the group version for the Cluster API types, by default the autoscaler

will look for the latest version of the group. For example, if MachineDeployments

exist in the cluster.x-k8s.io group at versions v1alpha1 and v1beta1, the

autoscaler will choose v1beta1.

In some cases it may be desirable to specify which version of the API the cluster

autoscaler should use. This can be useful in debugging scenarios, or in situations

where you have deployed multiple API versions and wish to ensure that the autoscaler

uses a specific version.

If the replica field is unset in the Cluster definition Autoscaling can be enabled as described above

As with other providers, if the device plugin on nodes that provides GPU

resources takes some time to advertise the GPU resource to the cluster, this

may cause Cluster Autoscaler to unnecessarily scale out multiple times.

The Cluster Autoscaler feature to enable balancing similar node groups

(activated with the --balance-similar-node-groups flag) is a powerful and

popular feature. When enabled, the Cluster Autoscaler will attempt to create

new nodes by adding them in a manner that balances the creation between

similar node groups. With Cluster API, these node groups correspond directly

to the scalable resources associated (usually MachineDeployments and MachineSets)

with the nodes in question. In order for the nodes of these scalable resources

to be considered similar by the Cluster Autoscaler, they must have the same

capacity, labels, and taints for the nodes which will be created from them.

To help assist the Cluster Autoscaler in determining which node groups are

similar, the command line flags --balancing-ignore-label and

--balancing-label are provided. For an expanded discussion about balancing

similar node groups and the options which are available, please see the

Cluster Autoscaler FAQ .

Because Cluster API can address many different cloud providers, it is important

to configure the balancing labels to ignore provider-specific labels which

are used for carrying zonal information on Kubernetes nodes. The Cluster

Autoscaler implementation for Cluster API does not assume any labels (aside from

the well-known Kubernetes labels )

to be ignored when running. Users must configure their Cluster Autoscaler deployment

to ignore labels which might be different between nodes, but which do not

otherwise affect node behavior or size (for example when two MachineDeployments

are the same except for their deployment zones). The Cluster API community has

decided not to carry cloud provider specific labels in the Cluster Autoscaler

to reduce the possibility for labels to clash between providers. Additionally,

the community has agreed to promote documentation and the use of the --balancing-ignore-label

flag as the preferred method of deployment to reduce the extended need for

maintenance on the Cluster Autoscaler when new providers are added or updated.

For further context around this decision, please see the

Cluster API Deep Dive into Cluster Autoscaler Node Group Balancing discussion from 2022-09-12 .

The following table shows some of the most common labels used by cloud providers

to designate regional or zonal information on Kubernetes nodes. It is shared

here as a reference for users who might be deploying on these infrastructures.

A MachineHealthCheck is a resource within the Cluster API which allows users to define conditions under which Machines within a Cluster should be considered unhealthy.

A MachineHealthCheck is defined on a management cluster and scoped to a particular workload cluster.

When defining a MachineHealthCheck, users specify a timeout for each of the conditions that they define to check on the Machine’s Node.

If any of these conditions are met for the duration of the timeout, the Machine will be remediated.

By default, the action of remediating a Machine should trigger a new Machine to be created to replace the failed one, but providers are allowed to plug in more sophisticated external remediation solutions.

Use the following example as a basis for creating a MachineHealthCheck for worker nodes:

Use this example as the basis for defining a MachineHealthCheck for control plane nodes managed via

the KubeadmControlPlane:

KubeadmControlPlane allows to control how remediation happen by defining an optional remediationStrategy;

this feature can be used for preventing unnecessary load on infrastructure provider e.g. in case of quota problems,or for allowing the infrastructure provider to stabilize in case of temporary problems.

To ensure that MachineHealthChecks do not perform excessive remediation of Machines,

short-circuiting is implemented to prevent further remediation via the remediation.triggerIf field within the MachineHealthCheck spec.

These values are independent of how many Machines are being checked by the MachineHealthCheck.

Note, when the percentage is not a whole number, the allowed number is rounded down.

Note, the above example had 10 machines as sample set. But, this would work the same way for any other number.

This is useful for dynamically scaling clusters where the number of machines keep changing frequently.

There are scenarios where remediation for a machine may be undesirable (eg. during cluster migration using clusterctl move). For such cases, MachineHealthCheck skips marking a Machine for remediation if:

Before deploying a MachineHealthCheck, please familiarise yourself with the following limitations and caveats:

This page describes how Cluster API deletes Machines.

Note: There are cases where Node drain, wait for volume detach and Node deletion is skipped. For these please take a look at the

implementation of the isDeleteNodeAllowed function

This section describes details of the Node drain process in Cluster API. Cluster API implements Node drain aligned

with kubectl drain. One major difference is that the Cluster API controller does not actively wait during Reconcile

until all Pods are drained from the Node. Instead it continuously evicts Pods and requeues after 20s until all relevant

Pods have been drained from the Node or until the Machine.spec.nodeDrainTimeout is reached (if configured).

Per default all Pods are drained at the same time. But with MachineDrainRules it’s also possible to define a drain order

for Pods with behavior Drain (Pods with WaitCompleted have a hard-coded order of 0). The Machine controller will drain

Pods in batches based on their order (from highest to lowest order).

To determine which Pods are blocking the drain and why you can take a look at the DrainingSucceeded condition on the Machine, e.g.:

If this doesn’t happen, please take a closer at the logs to determine which Pods still have to be evicted or haven’t gone away yet

(i.e. deletionTimestamp is set but the Pod objects still exist).

Users can enable/disable features by setting OS environment variables before running clusterctl init, e.g.:

As an alternative to environment variables, it is also possible to set variables in the clusterctl config file located at $XDG_CONFIG_HOME/cluster-api/clusterctl.yaml, e.g.:

In case a variable is defined in both the config file and as an OS environment variable, the environment variable takes precedence.

For more information on how to set variables for clusterctl, see clusterctl Configuration File

One way is to set experimental variables on the clusterctl config file. For CAPI, these configs are under ./test/e2e/config/... such as docker.yaml:

Another way is to set them as environmental variables before running e2e tests.

To enable/disable features on existing management clusters, users can edit the corresponding controller manager

deployments, which will then trigger a restart with the requested features. E.g. for the CAPI controller manager

deployment:

Following controller manager deployments have to be edited in order to enable/disable their respective experimental features:

Cluster API (CAPI) manages Kubernetes worker nodes primarily through Machine, MachineSet, and MachineDeployment objects. These primitives manage nodes individually (Machines), and have served well across a wide variety of providers.

However, many infrastructure providers already offer first-class abstractions for groups of compute instances (AWS: Auto Scaling Groups (ASG), Azure: Virtual Machine Scale Sets (VMSS), or GCP: Managed Instance Groups (MIG)). These primitives natively support scaling, rolling upgrades, and health management.

MachinePool brings these provider features into Cluster API by introducing a higher-level abstraction for managing a group of machines as a single unit.

A MachinePool is a Cluster API resource representing a group of worker nodes. Instead of reconciling each machine individually, CAPI delegates lifecycle management to the infrastructure provider.

The MachinePool controller coordinates between the Cluster API core and provider-specific implementations:

Most cloud providers already manage scaling, instance replacement, and health monitoring at the group level. MachinePool lets CAPI delegate lifecycle operations instead of duplicating that logic.

This provides more predictable, cloud-native semantics compared to reconciling many individual Machine objects.

MachinePool integrates with the Cluster Autoscaler in the same way that MachineDeployments do. In practice, the autoscaler treats a MachinePool as a node group, enabling scale-up and scale-down decisions based on cluster load.

Both MachineDeployment and MachinePool are valid options for managing worker nodes in Cluster API. The right choice depends on your infrastructure provider’s capabilities and your operational requirements.

Starting from Cluster API v1.7, MachinePool is enabled by default. No additional configuration is needed.

The following Cluster API infrastructure providers have implemented support for MachinePools:

When a MachineSet creates machines under certain circumstances, the operation fails or leads to a new machine that will be deleted and recreated in a short timeframe,

leading to unwanted Machine churn. Some of these circumstances include, but not limited to, creating a new Machine when Kubernetes version skew could be violated or

joining a Machine when the Control Plane is upgrading leading to failure because of mixed kube-apiserver version or due to the cluster load balancer delays in adapting

to the changes.

Per default all preflight checks are enabled for all MachineSets including new and existing MachineSets.

The enabled preflight checks can be overwritten with the --machineset-preflight-checks command-line flag.

It is also possible to opt-out of one or all of the preflight checks on a per MachineSet basis by specifying a

comma-separated list of the preflight checks via the machineset.cluster.x-k8s.io/skip-preflight-checks annotation

on the MachineSet.

The ClusterClass feature introduces a new way to create clusters which reduces boilerplate and enables flexible and powerful customization of clusters.

ClusterClass is a powerful abstraction implemented on top of existing interfaces and offers a set of tools and operations to streamline cluster lifecycle management while maintaining the same underlying API.

A ClusterClass becomes more useful and valuable when it can be used to create many Cluster of a similar

shape. The goal of this document is to explain how ClusterClasses can be written in a way that they are

flexible enough to be used in as many Clusters as possible by supporting variants of the same base Cluster shape.

The following example shows a basic ClusterClass. It contains templates to shape the control plane,

infrastructure and workers of a Cluster. When a Cluster is using this ClusterClass, the templates

are used to generate the objects of the managed topology of the Cluster.

The following example shows a Cluster using this ClusterClass. In this case a KubeadmControlPlane

with the corresponding DockerMachineTemplate, a DockerCluster and a MachineDeployment with

the corresponding KubeadmConfigTemplate and DockerMachineTemplate will be created. This basic

ClusterClass is already very flexible. Via the topology on the Cluster the following can be configured:

ClusterClass also supports MachinePool workers. They work very similar to MachineDeployments. MachinePools

can be specified in the ClusterClass template under the workers section like so:

As shown above, basic ClusterClasses are already very powerful. But there are cases where

more powerful mechanisms are required. Let’s assume you want to manage multiple Clusters

with the same ClusterClass, but they require different values for a field in one of the

referenced templates of a ClusterClass.

A concrete example would be to deploy Clusters with different registries. In this case,

every cluster needs a Cluster-specific value for .spec.kubeadmConfigSpec.clusterConfiguration.imageRepository

in KubeadmControlPlane. Use cases like this can be implemented with ClusterClass patches.

The following example shows how variables can be defined in the ClusterClass.

A variable definition specifies the name and the schema of a variable and if it is

required. The schema defines how a variable is defaulted and validated. It supports

a subset of the schema of CRDs. For more information please see the godoc .

The variable can then be used in a patch to set a field on a template referenced in the ClusterClass.

The selector specifies on which template the patch should be applied. jsonPatches specifies which JSON

patches should be applied to that template. In this case we set the imageRepository field of the

KubeadmControlPlaneTemplate to the value of the variable imageRepository. For more information

please see the godoc .

After creating a ClusterClass with a variable definition, the user can now provide a value for

the variable in the Cluster as in the example below.

The controller needs to generate names for new objects when a Cluster is getting created

from a ClusterClass. These names have to be unique for each namespace. The naming

strategy enables this by concatenating the cluster name with a random suffix.

It is possible to provide a custom template for the name generation of ControlPlane, MachineDeployment

and MachinePool objects.

This section will explain more advanced features of ClusterClass patches.

If you want to use many variations of MachineDeployments in Clusters, you can either define

a MachineDeployment class for every variation or you can define patches and variables to

make a single MachineDeployment class more flexible. The same applies for MachinePools.

In addition to variables specified in the ClusterClass, the following builtin variables can be

referenced in patches:

Builtin variables can be used to dynamically calculate image names. The version used in the patch

will always be the same as the one we set in the corresponding MachineDeployment or MachinePool

(works the same way with .builtin.controlPlane.version).

Variables can also be objects, maps and arrays. An object is specified with the type object and

by the schemas of the fields of the object. A map is specified with the type object and the schema

of the map values. An array is specified via the type array and the schema of the array items.

Objects, maps and arrays can be used in patches either directly by referencing the variable name,

or by accessing individual fields. For example:

Complex variables can be used to make references in templates configurable, e.g. the identityRef used in AzureCluster.

Of course it’s also possible to only make the name of the reference configurable, including restricting the valid values

to a pre-defined enum.

Even if OpenAPI schema allows defining free form objects, e.g.

User should be aware that the lack of the validation of users provided data could lead to problems

when those values are used in patch or when the generated templates are created (see e.g.

6135 ).

As a consequence we recommend avoiding this practice while we are considering alternatives to make

it explicit for the ClusterClass authors to opt in this feature, thus accepting the implied risks.

We already saw above that it’s possible to use variable values in JSON patches. It’s also

possible to calculate values via Go templating or to use hard-coded values.

Templates can be used to implement defaulting behavior during JSON patch value calculation. This can be used if the simple

constant default value which can be specified in the schema is not enough.

Patches can also be conditionally enabled. This can be done by configuring a Go template via enabledIf.

The patch is then only applied if the Go template evaluates to true. In the following example the httpProxy

patch is only applied if the httpProxy variable is set (and not empty).

Hard-coded values can be used to test the impact of a patch during development, gradually roll out patches, etc. .

A boolean variable can be used to enable/disable a patch (or “feature”). This can have opt-in or opt-out behavior

depending on the default value of the variable.

Of course the same is possible by adding a boolean variable to a configuration object.

Builtin variables can be leveraged to apply a patch only for a specific Kubernetes version.

In some cases the ClusterClass authors want a patch to be computed according to the Kubernetes version in use.

While this is not a problem “per se” and it does not differ from writing any other patch, it is important

to keep in mind that there could be different Kubernetes version in a Cluster at any time, all of them

accessible via built in variables:

This info should provide the bases for developing version-aware patches, allowing the patch author to determine when a

patch should adapt to the new Kubernetes version by choosing one of the above variables. In practice the

following rules applies to the most common use cases:

Sometimes users need to define variables to be used by version-aware patches, and in this case it is important

to keep in mind that there could be different Kubernetes versions in a Cluster at any time.

A simple approach to solve this problem is to define a map of version-aware variables, with the key of each item

being the Kubernetes version. Patch could then use the proper builtin variables as a lookup entry to fetch

the corresponding values for the Kubernetes version in use by each object.

As a consequence ClusterClass authors should pay special attention when the following

conditions apply in order to prevent errors when a patch is applied:

A workaround in this particular case is to create the array in the patch instead of adding to the non-existing one.

When creating the slice, existing values would be overwritten so this should only be used when it does not exist.

The following example shows both cases to consider while writing a patch for adding a value to a slice.

This patch targets to add a file to the files slice of a KubeadmConfigTemplate which has omitempty set.

When planning a change to a ClusterClass, users should always take into consideration

how those changes might impact the existing Clusters already using the ClusterClass, if any.

There are two strategies for defining how a ClusterClass change rolls out to existing Clusters:

The first strategy is the recommended choice for people starting with ClusterClass; it

requires the users to create a new ClusterClass with the expected changes, and then

rebase each Cluster to use the newly created ClusterClass.

By splitting the change to the ClusterClass and its rollout

to Clusters into separate steps the user will reduce the risk of introducing unexpected

changes on existing Clusters, or at least limit the blast radius of those changes

to a small number of Clusters already rebased (in fact it is similar to a canary deployment).

The second strategy listed above instead requires changing a ClusterClass “in place”, which can

be simpler and faster than creating a new ClusterClass. However, this approach

means that changes are immediately propagated to all the Clusters already using the

modified ClusterClass. Any operation involving many Clusters at the same time has intrinsic risks,

and it can impact heavily on the underlying infrastructure in case the operation triggers

machine rollout across the entire fleet of Clusters.

However, regardless of which strategy you are choosing to implement your changes to a ClusterClass,

please make sure to:

Templates are an integral part of a ClusterClass, and thus the same considerations

described in the previous paragraph apply. When changing

a template referenced in a ClusterClass users should also always plan for how the

change should be propagated to the existing Clusters and choose the strategy that best

suits expectations.

Rebasing is an operational practice for transitioning a Cluster from one ClusterClass to another,

and the operation can be triggered by simply changing the value in Cluster.spec.topology.class.

When changing a ClusterClass, the system validates the required changes according to

a set of compatibility rules to prevent changes which would lead to a non-functional

Cluster, e.g. changing the InfrastructureProvider from AWS to Azure.

If the proposed changes are evaluated as dangerous, the operation is rejected.

Some general notes that can help you to understand what you should

expect when planning your ClusterClass changes:

The following table documents the effects each ClusterClass change can have on a Cluster;

Similar considerations apply to changes introduced by changes in Cluster.Topology or by

changes introduced by patches.

NOTE: for people used to operating Cluster API without Cluster Class, it could also help to keep in mind that the

underlying objects like control plane and MachineDeployment act in the same way with and without a ClusterClass.

The topology reconciler enforces values defined in the ClusterClass templates into the topology

owned objects in a Cluster.

A corollary of the behaviour described above is that it is technically possible to change fields in the object

which are not derived from the templates and patches, but we advise against using the possibility

or making ad-hoc changes in generated objects unless otherwise needed for a workaround. It is always

preferable to improve ClusterClasses by supporting new Cluster variants in a reusable way.

Using a managed topology the operation to upgrade a Kubernetes cluster is a one-touch operation.

Let’s assume we have created a CAPD cluster with ClusterClass and specified Kubernetes v1.21.2 (as documented in the Quick Start guide ). Specifying the version is done when running clusterctl generate cluster. Looking at the cluster, the version of the control plane and the MachineDeployments is v1.21.2.

The upgrade will take some time to roll out as it will take place machine by machine with older versions of the machines only being removed after healthy newer versions come online.

After a few minutes the upgrade will be complete and the output will be similar to:

When using a managed topology scaling of MachineDeployments, both up and down, should be done through the Cluster topology.

Assume we have created a CAPD cluster with ClusterClass and Kubernetes v1.23.3 (as documented in the Quick Start guide ). Initially we should have a MachineDeployment with 3 replicas. Running

We can scale up or down this MachineDeployment through the Cluster object by changing the replicas field under /spec/topology/workers/machineDeployments/0/replicas

The 0 in the path refers to the position of the target MachineDeployment in the list of our Cluster topology. As we only have one MachineDeployment we’re targeting the first item in the list under /spec/topology/workers/machineDeployments/.

As well as scaling a MachineDeployment, Cluster operators can edit the labels and annotations applied to a running MachineDeployment using the Cluster topology as a single point of control.

MachineDeployments in a managed Cluster are defined in the Cluster’s topology. Cluster operators can add a MachineDeployment to a living Cluster by adding it to the cluster.spec.topology.workers.machineDeployments field.

Assume we have created a CAPD cluster with ClusterClass and Kubernetes v1.23.3 (as documented in the Quick Start guide ). Initially we should have a single MachineDeployment with 3 replicas. Running

A new MachineDeployment can be added to the Cluster by adding a new MachineDeployment spec under /spec/topology/workers/machineDeployments/. To do so we can patch our Cluster with:

When using a managed topology scaling of ControlPlane Machines, where the Cluster is using a topology that includes ControlPlane MachineInfrastructure, should be done through the Cluster topology.

As well as scaling a ControlPlane, Cluster operators can edit the labels and annotations applied to a running ControlPlane using the Cluster topology as a single point of control.

A ClusterClass can use variables and patches in order to allow flexible customization of Clusters derived from a ClusterClass. Variable definition allows two or more Cluster topologies derived from the same ClusterClass to have different specs, with the differences controlled by variables in the Cluster topology.

Assume we have created a CAPD cluster with ClusterClass and Kubernetes v1.23.3 (as documented in the Quick Start guide ). Our Cluster has a variable etcdImageTag as defined in the ClusterClass. The variable is not set on our Cluster. Some variables, depending on their definition in a ClusterClass, may need to be specified by the Cluster operator for every Cluster created using a given ClusterClass.

In order to specify the value of a variable all we have to do is set the value in the Cluster topology.

In order to run a different version of etcd in new ControlPlane machines - the part of the spec this variable sets - change the value using the below patch:

Note: Changing the etcd version may have unintended impacts on a running Cluster. For safety the cluster should be reapplied after running the above variable patch.

To perform more significant changes using a Cluster as a single point of control, it may be necessary to change the ClusterClass that the Cluster is based on. This is done by changing the class referenced in /spec/topology/class.

Users should always aim at ensuring the stability of the Cluster and of the applications hosted on it while

using spec.topology as a single point of control for making changes to the objects that are part of the Cluster.

There are some special considerations for ClusterClass regarding Cluster API upgrades when the upgrade includes a bump

of the apiVersion of infrastructure, bootstrap or control plane provider CRDs.

The recommended approach is to first upgrade Cluster API and then update the apiVersions in the ClusterClass references afterwards.

By following above steps, there won’t be any disruptions of the reconciliation as the Cluster topology controller is able to reconcile the Cluster

even with the old apiVersions in the ClusterClass.

Note: The apiVersions in ClusterClass cannot be updated before Cluster API because the new apiVersions don’t exist in

the management cluster before the Cluster API upgrade.

In general the Cluster topology controller always uses exactly the versions of the CRDs referenced in the ClusterClass.

This means in the following example the Cluster topology controller will always use v1beta1 when reconciling/applying

patches for the infrastructure ref, even if the DockerClusterTemplate already has a v1beta2 apiVersion.

The Runtime SDK feature provides an extensibility mechanism that allows systems, products, and services built on top of Cluster API to hook into a workload cluster’s lifecycle.

As a developer building systems on top of Cluster API, if you want to hook into the Cluster’s lifecycle via

a Runtime Hook, you have to implement a Runtime Extension handling requests according to the

OpenAPI specification for the Runtime Hook you are interested in.

Runtime Extensions by design are very powerful and flexible, however given that with great power comes

great responsibility, a few key consideration should always be kept in mind (more details in the following sections):

Please note that following similar practices is already commonly accepted in the Kubernetes ecosystem for

Kubernetes API server admission webhooks. Runtime Extensions share the same foundation and most of the same

considerations/concerns apply.

As mentioned above as a developer building systems on top of Cluster API, if you want to hook in the Cluster’s

lifecycle via a Runtime Extension, you have to implement an HTTPS server handling a discovery request and a set

of additional requests according to the OpenAPI specification for the Runtime Hook you are interested in.

The following shows a minimal example of a Runtime Extension server implementation:

Please note that a Runtime Extension server can serve multiple Runtime Hooks (in the example above

BeforeClusterCreate and BeforeClusterUpgrade) at the same time. Each of them are handled at a different path, like the

Kubernetes API server does for different API resources. The exact format of those paths is handled by the server

automatically in accordance to the OpenAPI specification of the Runtime Hooks.

Please note that Cluster API is only able to enforce the correct request and response types as defined by a Runtime Hook version.

Developers are fully responsible for all other elements of the design of a Runtime Extension implementation, including:

When using Golang the Runtime Extension developer can benefit from the following packages (provided by the

sigs.k8s.io/cluster-api module) as shown in the example above:

While writing a Runtime Extension the following important guidelines must be considered:

Runtime Extension processing adds to reconcile durations of Cluster API controllers. They should respond to requests

as quickly as possible, typically in milliseconds. Runtime Extension developers can decide how long the Cluster API Runtime

should wait for a Runtime Extension to respond before treating the call as a failure (max is 30s) by returning the timeout

during discovery. Of course a Runtime Extension can trigger long-running tasks in the background, but they shouldn’t block

synchronously.

Runtime Extension failure could result in errors in handling the workload clusters lifecycle, and so the implementation

should be robust, have proper error handling, avoid panics, etc. Failure policies can be set up to mitigate the

negative impact of a Runtime Extension on the Cluster API Runtime, but this option can’t be used in all cases

(see Error Management ).

A Runtime Hook can be defined as “blocking” - e.g. the BeforeClusterUpgrade hook allows a Runtime Extension

to prevent the upgrade from starting. A Runtime Extension registered for the BeforeClusterUpgrade hook

can block by returning a non-zero retryAfterSeconds value. Following consideration apply:

Detailed description of what “blocking” means for each specific Runtime Hooks is documented case by case

in the hook-specific implementation documentation (e.g. Implementing Lifecycle Hook Runtime Extensions ).

It is recommended that Runtime Extensions should avoid side effects if possible, which means they should operate

only on the content of the request sent to them, and not make out-of-band changes. If side effects are required,

rules defined in the following sections apply.

An idempotent Runtime Extension is able to succeed even in case it has already been completed before (the Runtime

Extension checks current state and changes it only if necessary). This is necessary because a Runtime Extension

may be called many times after it already succeeded because other Runtime Extensions for the same hook may not

succeed in the same reconcile.

A practical example that explains why idempotence is relevant is the fact that extensions could be called more

than once for the same lifecycle transition, e.g.

Each Runtime Extension should accomplish its task without depending on other Runtime Extensions. Introducing

dependencies across Runtime Extensions makes the system fragile, and it is probably a consequence of poor

“Separation of Concerns” between extensions.

A deterministic Runtime Extension is implemented in such a way that given the same input it will always return

the same output.

Some Runtime Hooks, e.g. like external patches, might explicitly request for corresponding Runtime Extensions

to support this property. But we encourage developers to follow this pattern more generally given that it fits

well with practices like unit testing and generally makes the entire system more predictable and easier to troubleshoot.

RuntimeExtension authors should be aware that error messages are surfaced as conditions in Kubernetes resources

and recorded in Cluster API controller’s logs. As a consequence:

To register your runtime extension apply the ExtensionConfig resource in the management cluster, including your CA

certs, ClusterIP service associated with the app and namespace, and the target namespace for the given extension. Once

created, the extension will detect the associated service and discover the associated Hooks. For clarification, you can

check the status of the ExtensionConfig. Below is an example of ExtensionConfig -

Settings can be added to the ExtensionConfig object in the form of a map with string keys and values. These settings are

sent with each request to hooks registered by that ExtensionConfig. Extension developers can implement behavior in their

extensions to alter behavior based on these settings. Settings should be well documented by extension developers so that

ClusterClass authors can understand usage and expected behaviour.

Settings can be provided for individual external patches by providing them in the ClusterClass .spec.patches[*].external.settings.

This can be used to overwrite settings at the ExtensionConfig level for that patch.

In case a Runtime Extension returns an error, the error will be handled according to the corresponding failure policy

defined in the response of the Discovery call.

Additional considerations about errors that apply only to a specific Runtime Hook will be documented in the hook-specific

implementation documentation.

Make sure to add the ExtensionConfig object to the YAML manifest used to deploy the runtime extensions (see Extensionsconfig for more details).

After you implemented and deployed a Runtime Extension you can manually test it by sending HTTP requests.

This can be for example done via kubectl:

Notably, the Cluster API user experience remains the same as of today no matter of the in-place update feature is enabled

or not e.g. in order to trigger a MachineDeployment rollout, you have to rotate a template, etc.

Users should care ONLY about the desired state (as of today).

Cluster API is responsible to choose the best strategy to achieve desired state, and with the introduction of

update extensions, Cluster API is expanding the set of tools that can be used to achieve the desired state.

If external update extensions can not cover the totality of the desired changes, CAPI will fall back to Cluster API’s default,

immutable rollouts.

Cluster API will be also responsible to determine which Machine/MachineSet should be updated, as well as to handle rollout

options like MaxSurge/MaxUnavailable. With this regard:

In summary, Runtime Extensions are components that should be designed, written and deployed with great caution given

that they can affect the proper functioning of the Cluster API runtime. A poorly implemented Runtime Extension could

potentially block updates.

For additional details about the OpenAPI spec of the upgrade plan hooks, please download the runtime-sdk-openapi.yamlSwagger UI .

This hook is called by KCP when performing the “can update in-place” for a control plane machine.

This hook is called by the MachineDeployment controller when performing the “can update in-place” for all the Machines controlled by

a MachineSet.

This hook is called by the Machine controller when performing the in-place updates for a Machine.

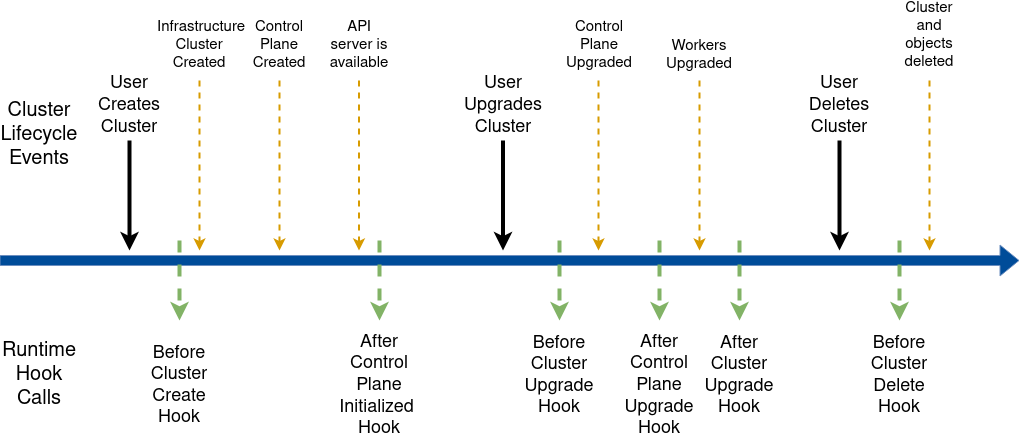

The lifecycle hooks allow hooking into the Cluster lifecycle. The following diagram provides an overview:

In summary, Runtime Extensions are components that should be designed, written and deployed with great caution given

that they can affect the proper functioning of the Cluster API runtime. A poorly implemented Runtime Extension could

potentially block lifecycle transitions from happening.

For additional details about the OpenAPI spec of the lifecycle hooks, please download the runtime-sdk-openapi.yamlSwagger UI .

This hook is called after the Cluster object has been created by the user, immediately before all the objects which

are part of a Cluster topology(*) are going to be created. Runtime Extension implementers can use this hook to

determine/prepare add-ons for the Cluster and block the creation of those objects until everything is ready.

(*) The objects which are part of a Cluster topology are the infrastructure Cluster, the Control Plane, the

MachineDeployments and the templates derived from the ClusterClass.

This hook is called after the Control Plane reports that the control plane is initialized, which means the API server can accept requests.

This usually happens sometime during the first CP machine provisioning or immediately thereafter.

Runtime Extension implementers can use this hook to execute tasks, for example component installation on workload clusters, that are only

possible once the Control Plane is available. This hook does not block any further changes to the Cluster.

Note: While the upgrade is blocked changes made to the Cluster Topology will be delayed propagating to the underlying

objects while the object is waiting for upgrade. Example: modifying ControlPlane/MachineDeployments (think scale up),

or creating new MachineDeployments will be delayed until the target ControlPlane/MachineDeployment is ready to pick up the upgrade.

This ensures that the ControlPlane and MachineDeployments do not perform a rollout prematurely while waiting to be rolled out again

for the version upgrade (no double rollouts).

This also ensures that any version specific changes are only pushed to the underlying objects also at the correct version.

This hook is called before a new version is propagated to the control plane object, which happens as many times

as defined by the upgrade plan.

Runtime Extension implementers can use this hook to execute pre-upgrade add-on tasks and block upgrades of the ControlPlane.

This hook is called after the control plane has been upgraded to the version specified in spec.topology.version

or to an intermediate version in the upgrade plan and:

Runtime Extension implementers can use this hook to execute post-upgrade add-on tasks and block upgrades to the next

version of the control plane or to workers until everything is ready.

Note: While the MachineDeployments upgrade is blocked changes made to existing MachineDeployments and creating new MachineDeployments

will be delayed while the object is waiting for upgrade. Example: modifying MachineDeployments (think scale up),

or creating new MachineDeployments will be delayed until the target MachineDeployment is ready to pick up the upgrade.

This ensures that the MachineDeployments do not perform a rollout prematurely while waiting to be rolled out again for the version upgrade (no double rollouts).

This also ensures that any version specific changes are only pushed to the underlying objects also at the correct version.

This hook is called before a new version is propagated to workers. Runtime Extension implementers

can use this hook to execute pre-upgrade add-on tasks and block upgrades of Workers.

This hook is called after all the workers have been upgraded to the version specified in spec.topology.version

or to an intermediate version in the upgrade plan, and:

This hook is called after the Cluster, control plane and workers have been upgraded to the version specified in

spec.topology.version. Runtime Extensions implementers can use this hook to execute post-upgrade add-on tasks.

This hook blocks new upgrades to start until it is completed.

This hook is called after the Cluster deletion has been triggered by the user and immediately before the topology

of the Cluster is going to be deleted. Runtime Extension implementers can use this hook to execute

cleanup tasks for the add-ons and block deletion of the Cluster and descendant objects until everything is ready.

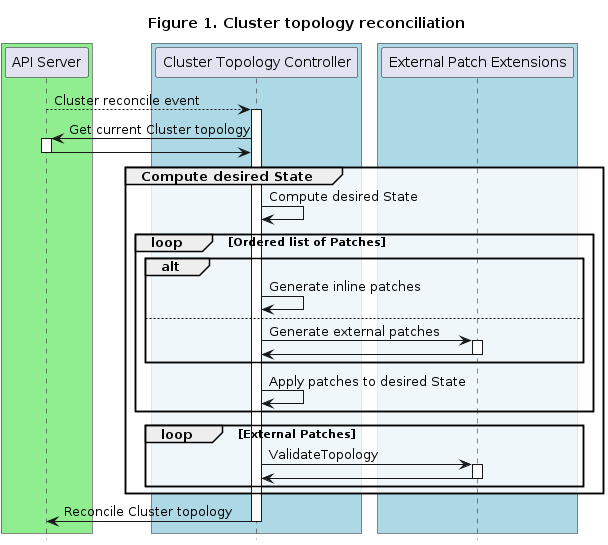

Three different hooks are called as part of Topology Mutation - two in the Cluster topology reconciler and one in the ClusterClass reconciler.

In summary, Runtime Extensions are components that should be designed, written and deployed with great caution given

that they can affect the proper functioning of the Cluster API runtime. A poorly implemented Runtime Extension could

potentially block topology reconcile from happening.

For additional details about the OpenAPI spec of the topology mutation hooks, please download the runtime-sdk-openapi.yamlSwagger UI .

The DiscoverVariables hook can be used to supply variable definitions for use in external patches. These variable definitions are added to

the status of any applicable ClusterClasses. Clusters using the ClusterClass can then set values for those variables.

External variable definitions are discovered by calling the DiscoverVariables runtime hook. This hook is called from the ClusterClass reconciler.

Once discovered the variable definitions are validated and stored in ClusterClass status.

Variable definitions can be inline in the ClusterClass or from any number of external DiscoverVariables hooks. The source